Instagram is rolling out two features to curb online bullying

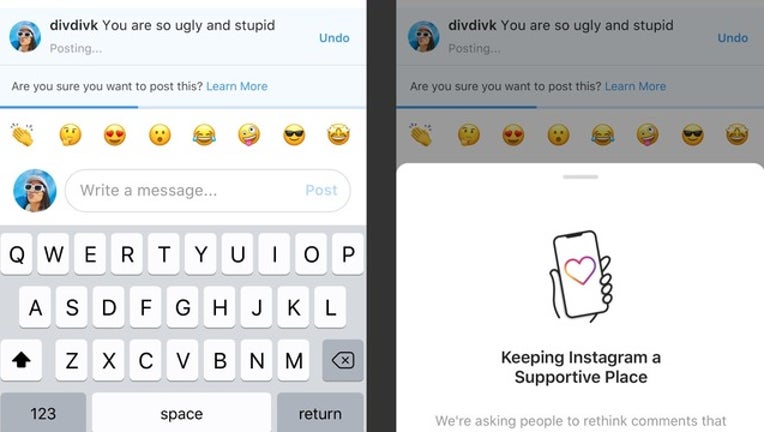

Instagram is rolling out a couple of features, one of which uses artificial intelligence to curb bullying on its platform. Photo: Instagram.

San Jose, Calif. - Internet platforms can often be a hostile space where some people say incredibly mean and abusive things, sometimes under pseudonymous profiles. Instagram said Monday it is rolling out a couple of changes on its platform to curb online bullying and make it a safer space for young people. These features were promised at Facebook’s F8 conference in late April 2019.

One of these features uses artificial intelligence to detect abusive and offensive language in user comments and prompts the person writing the comment to reconsider. It asks, “Are you sure you want to post this?” and gives the commenter a chance to undo the comment.

“This intervention gives people a chance to reflect and undo their comment and prevents the recipient from receiving the harmful comment notification,” writes Adam Mosseri, Head of Instagram, in a blog post published Monday. “From early tests of this feature, we have found that it encourages some people to undo their comment and share something less hurtful once they have had a chance to reflect,” he adds.

The second feature, called Restrict, puts more control in the hands of the user to curb bullying. Restricting a person makes their comments invisible to others, and the account holder can then choose to approve individual comments made by the restricted commenter. Restricted people are also prevented from seeing whether a user is active on Instagram or has read their direct messages. The Restrict feature was developed because young people are reluctant to block or unfollow a bully, as doing so could escalate the situation and provoke retaliation in real life, writes Mosseri.

The two features are still being rolled out, and were not live in the reporter’s account at the time of filing.

The changes come after several media reports on teens being bullied on Instagram. A 2017 survey by British anti-bullying organization Ditch The Label found that more people were bullied on Instagram than on any other platform included in the survey.