AI project at UT Austin focuses on developing beneficial, ethical artificial intelligence

While a lot of attention has been focused on ChatGPT, UT researchers are working on several other ways artificial intelligence can be used in schools.

AUSTIN, Texas - Artificial intelligence (AI) is here to stay, and it’s presenting new opportunities and challenges in the classroom.

"We're aware of all the amazing and wonderful things that A.I. can do," said Dr. Ken Fleischmann, a professor with the University of Texas at Austin’s School of Information. "But at the same time, there are lots of potential negative implications of A.I."

Dr. Fleischmann leads the Good Systems project at UT, which he says focuses on developing A.I. that’s beneficial and ethical, while avoiding the pitfalls.

"Once the harm has been done, it can be really hard to undo that harm," said Fleischmann.

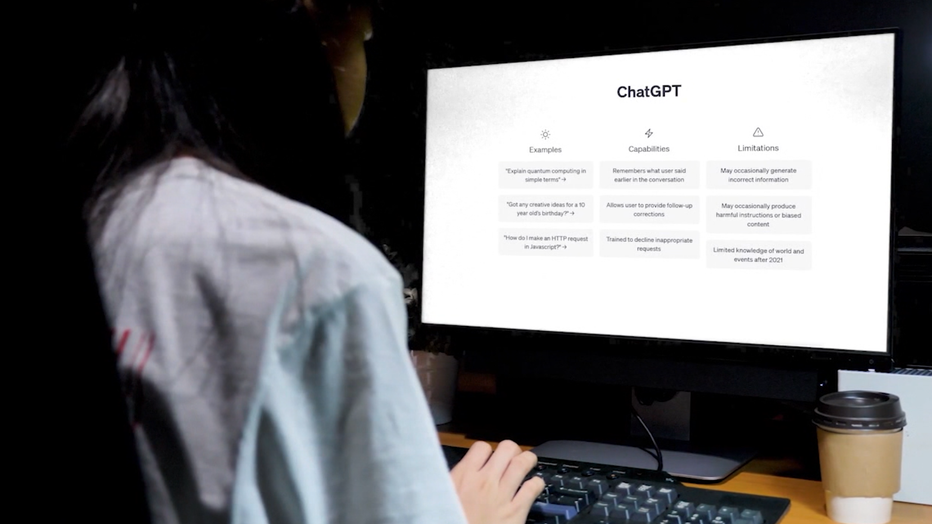

According to OpenAI's website, ChatGPT is a chatbot model that can interact with users in a conversational manner.

While a lot of attention has been focused on ChatGPT, which allows a user to input information and get a response, UT researchers are working on several other ways A.I. can be used in schools.

For example, "smart hand tools," one of the technologies being developed by Good Systems, could benefit career and technical education.

"We're partnering with Austin Community College on how A.I. can be used to speed up and make safer and more efficient [the] training process for occupation[s] such as welding," said Fleischmann.

A.I. could also help students with different learning needs.

"A.I. tools could be used to make reading materials more accessible. This would be specifically for students who are blind or have low vision to make it easier to navigate library databases," said Fleischmann.

Doctoral candidate Nitin Verma is studying deepfakes, A.I.-generated videos that can impersonate people. Deepfakes have raised concerns in the world of politics, but they could also be valuable in teaching subjects such as history and art.

"You could, for example, have Abe Lincoln really appear to read out his famous speeches," said Verma. "Learning art, for example, with Salvador Dalí as your teacher."

As for concerns that tools like ChatGPT will lead to more cheating, Fleischmann says schools are developing systems to detect it, but he adds that it’s also a chance for teachers to develop more creative assignments.

"The more nuanced the instructions are, the better humans are at dealing with that, and the harder it will be for an A.I. at this stage," said Fleischmann.

Verma argues the problem of cheating goes far beyond the technology itself.

"You could have cheated even in the pen and paper era. You could cheat until a couple of years ago with your laptop. That’s a more human problem," said Verma.

"There's definitely pros and cons," said UT freshman Malik Kaniga.

Kaniga hasn’t yet used A.I. for his college studies, but he’s pretty sure it’s coming.

"There's always like a scare of like, how lazy can we get to let A.I. do everything for us, as well as A.I. can teach us stuff that we didn't really know before," said Kaniga.

Elsewhere at UT, researchers are working on a "brain activity decoder" that can reveal thoughts in people’s minds.